Mastering Edge AI Vision: NPU-Accelerated Inference with TinyML and Industrial Gateways

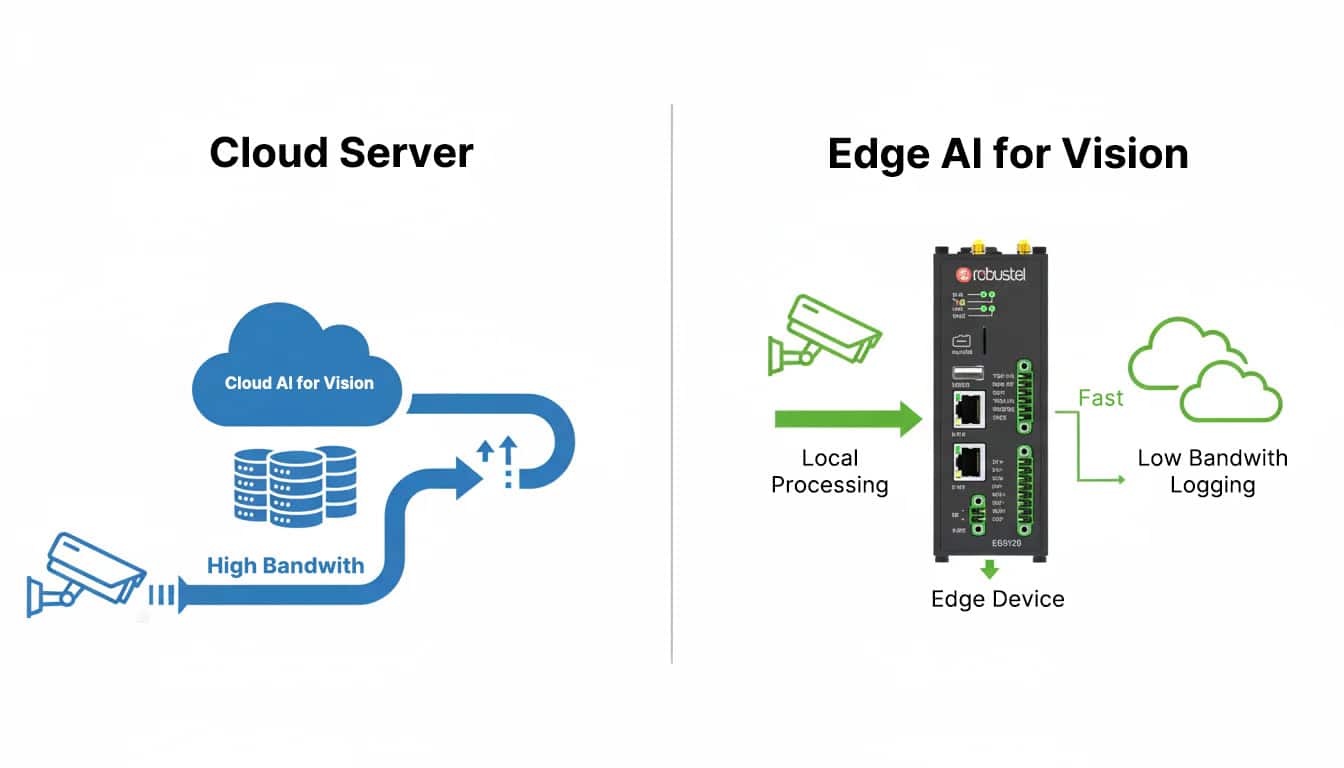

The era of “streaming all video to the cloud” is ending. For modern industrial automation and smart infrastructure, the future belongs to On-device Inference. This guide serves as a technical roadmap for engineers and AI architects looking to transition from cloud-dependent visual monitoring to autonomous, real-time Edge AI Vision.

We dive deep into the hardware-software synergy required to move your computer vision projects from a lab-bound proof-of-concept to a scalable, production-ready reality.

What You’ll Master in This Guide:

- The Intelligence Spectrum: Understanding the critical boundary between TinyML (MCU-level) and Edge AI (Gateway-level) for high-resolution vision.

- Hardware Efficiency: Why a general-purpose CPU alone struggles with deep learning workloads, and how a dedicated Neural Processing Unit (NPU), such as the 2.3 TOPS engine in the Robustel EG5120, enables millisecond-level inference.

- The “Edge-to-Action” Workflow: A practical blueprint for integrating IP cameras, Dockerized AI models (TensorFlow Lite/ONNX), and industrial I/O for instant physical response.

- Data Gravity & Bandwidth Optimization: How local processing slashes cloud costs and latency by shifting from raw video streams to lightweight MQTT-based metadata reporting.

Introduction: The Intelligent “Eyes” at the Industrial Edge

In my conversations with operations managers across various sectors, one goal consistently tops their list: the desire to truly “see” and interpret their remote operations in real time. Whether it’s identifying a microscopic defect on a high-speed conveyor, managing site security via facial recognition, or automating license plate reading in a logistics hub, the demand for visual intelligence is surging. For years, the industry’s default solution was to stream gigabytes of raw video data to the cloud for analysis. However, as many found out the hard way, this “cloud-first” approach is often too slow, too expensive, and prone to connectivity failures.

But the paradigm is shifting. What if your on-site gateway didn’t just capture images, but actually understood them?

This is where TinyML and Edge AI for Vision are rewriting the rules of industrial automation. By performing on-device inference—deploying AI models directly onto the hardware at the network’s edge—we can now execute complex object detection and image classification with millisecond-level latency. This isn’t a future-concept from a lab; it’s a ready-to-deploy technology that is rapidly becoming a mandatory feature for any professional Industrial IoT Edge Gateway. Choosing the right hardware is no longer just about connectivity; it’s about the processing power required to make split-second decisions where the action happens.

Defining the Scope—TinyML vs. Edge AI for Vision

While the industry often groups them together, TinyML and Edge AI represent two distinct scales of intelligence. Understanding where your project sits is the first step in hardware selection.

- TinyML (Intelligence at the Sensor Level): This refers to running highly optimized, lightweight models on resource-constrained hardware like Microcontrollers (MCUs). In the world of vision, TinyML is typically used for “Binary Tasks”—simple triggers like detecting if a person is present or if a box is on a conveyor belt. It’s ultra-low power but limited in complexity.

- Edge AI (Vision at the Gateway Level): This is where serious industrial automation happens. Edge AI utilizes more powerful Microprocessors (MPUs), often paired with a dedicated NPU (Neural Processing Unit) or AI accelerator. This setup allows for the real-time analysis of high-resolution video streams, multi-object tracking, and granular defect inspection.

For any professional Industrial Machine Vision application, you are almost certainly moving into the realm of Edge AI. To handle these sophisticated inference workloads without bottlenecking your operations, you need a device that balances raw compute power with thermal efficiency.

The Engine of Edge Vision—Why an NPU is Non-Negotiable

To execute Edge AI for computer vision at industrial speeds, a general-purpose CPU alone is rarely enough. Its handful of cores is built for sequential, branch-heavy code rather than the massively parallel arithmetic that deep learning demands. You need a gateway equipped with a Neural Processing Unit (NPU): a specialized processor engineered to accelerate the tensor operations, chiefly matrix multiplications and convolutions, that dominate AI models.
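To get a feel for the scale of that arithmetic, a rough sketch can count the multiply-accumulate (MAC) operations in a single convolution layer. The layer dimensions below are illustrative assumptions, not figures taken from any particular model or from the EG5120 documentation.

```python
def conv2d_macs(out_h, out_w, in_channels, out_channels, kernel_size):
    """Multiply-accumulate count for one standard 2D convolution layer."""
    # Each output value needs kernel_size * kernel_size * in_channels MACs.
    macs_per_output = kernel_size * kernel_size * in_channels
    return out_h * out_w * out_channels * macs_per_output


# Hypothetical layer: 112x112 output map, 32 -> 64 channels, 3x3 kernel.
layer_macs = conv2d_macs(out_h=112, out_w=112, in_channels=32,
                         out_channels=64, kernel_size=3)
print(f"{layer_macs:,} MACs for one layer, one frame")
# prints "231,211,008 MACs for one layer, one frame"
```

A vision model stacks many such layers and repeats the work for every frame. Because the individual multiply-accumulates are largely independent of one another, hardware with many parallel arithmetic units can work through them far faster than a few CPU cores.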

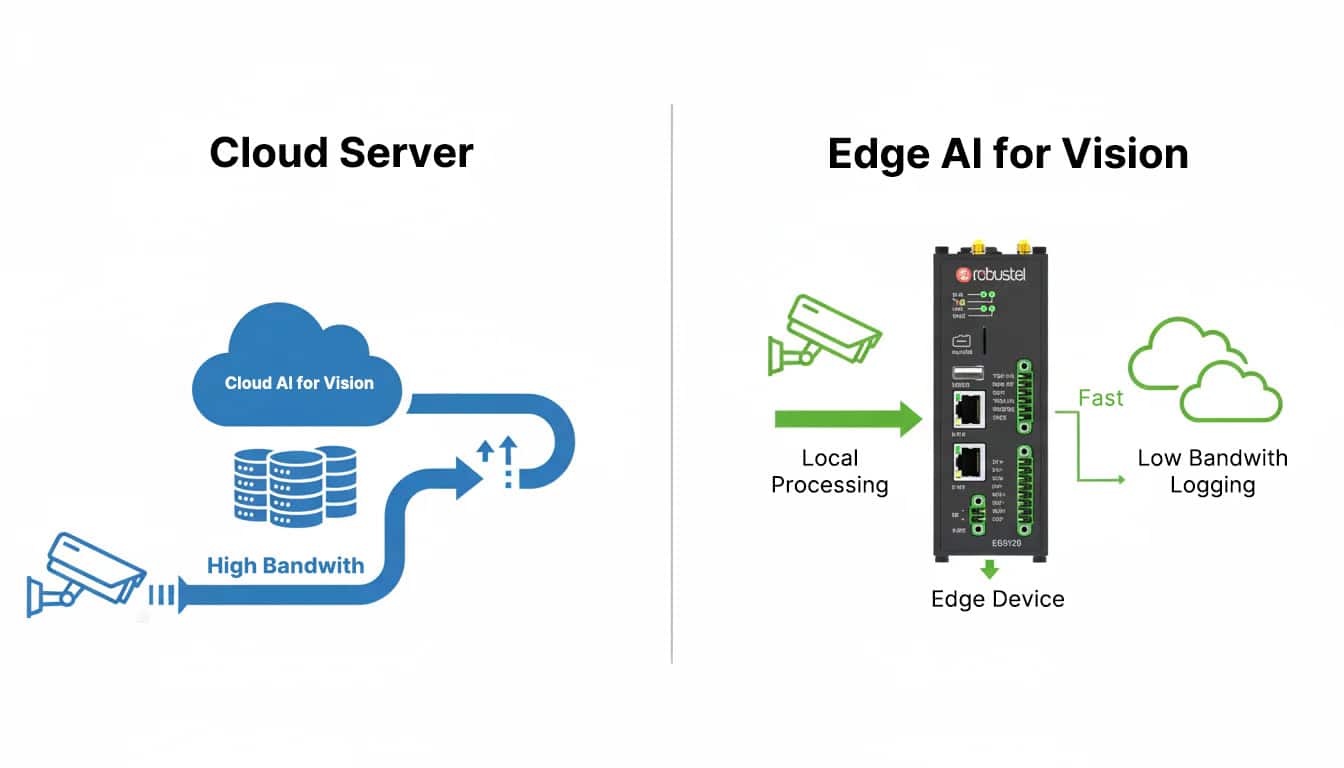

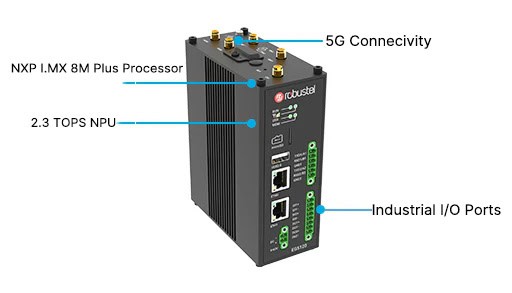

The Robustel EG5120 was purpose-built to fill this high-performance gap. It moves beyond traditional routing to become a true “Edge Compute Hub” through three critical features:

- NXP i.MX 8M Plus Intelligence: While its quad-core ARM processor handles general system tasks, the EG5120’s “secret weapon” is its integrated 2.3 TOPS NPU. This dedicated silicon allows the gateway to perform trillions of AI calculations per second with remarkable energy efficiency, ensuring high-speed inference without overheating in harsh environments.

- A “Developer-First” Software Stack: Running RobustOS Pro (built on a hardened Debian 11 base), the EG5120 provides a familiar, stable Linux environment. This open architecture allows developers to deploy industry-standard AI frameworks like TensorFlow Lite, PyTorch, and ONNX, significantly shortening the time-to-market for custom vision models.

- Industrial I/O & Low-Latency Connectivity: Vision is useless without action. With 5G/4G backhaul, Gigabit Ethernet, and industrial interfaces (RS485, DI/DO), the EG5120 acts as the bridge between the digital and physical worlds. It can ingest high-resolution video streams from local cameras and instantly trigger an Edge-to-Action response, such as signaling a robotic arm to divert a defective item in milliseconds.

Edge AI in Action—Transforming Quality Control

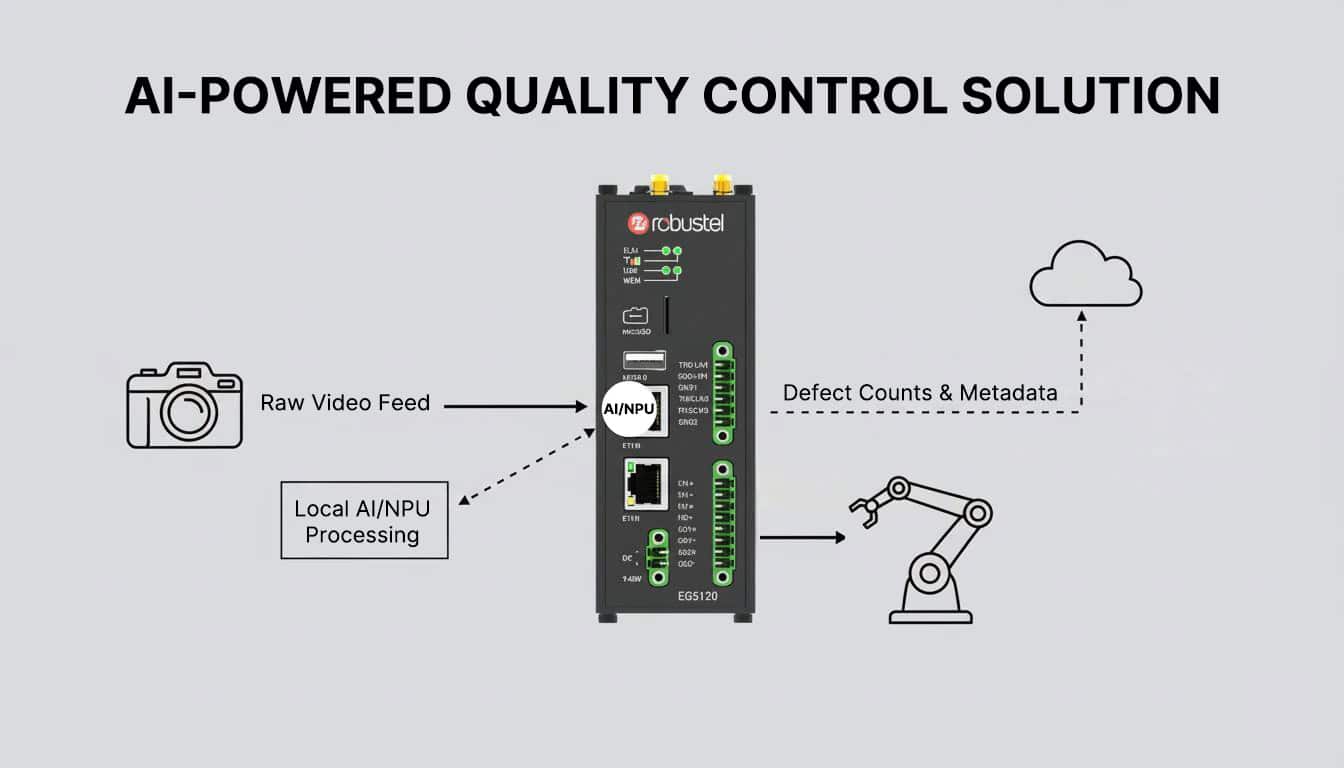

To truly appreciate the power of an NPU-accelerated gateway, let’s look at a concrete example: Automated Quality Control in a high-speed bottling plant. This is where the EG5120 moves from being a “router” to an indispensable part of the production line.

The Workflow: From Vision to Verdict

- The Vision Setup: A high-definition IP camera is positioned over the conveyor belt, feeding a raw RTSP video stream directly into the EG5120’s Gigabit Ethernet port.

- The Containerized Model: Using Docker, a lightweight object detection model (optimized via TensorFlow Lite) is deployed onto the gateway. The model is pre-trained to distinguish between a “sealed bottle” and a “missing cap.”

- On-Device Inference: This is the “Aha!” moment. The EG5120’s NPU processes every single video frame in real-time. This complex neural network analysis happens in milliseconds right on the factory floor—not in a distant data center.

- Immediate Physical Action: The millisecond a defect is detected, the EG5120 triggers a Digital Output (DO) signal. This pulse activates a pneumatic kicker that physically removes the faulty bottle from the line before it can reach packaging.

- Data-Efficient Reporting: Instead of choking the factory’s bandwidth by uploading hours of raw video, the gateway sends a tiny MQTT message, e.g., {"defect_id": 102, "status": "rejected"}, to the cloud for high-level analytics and OEE (Overall Equipment Effectiveness) reporting.
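The last three steps of this workflow (inference result, physical action, reporting) can be sketched as one small decision function. This is a minimal illustration, not Robustel’s implementation: the function name, the 0.8 confidence threshold, the topic `factory/line1/defects`, and the `set_digital_output` and `publish` callables are all hypothetical stand-ins for the real model output, DO driver, and MQTT client.

```python
import json


def handle_detection(label, confidence, set_digital_output, publish,
                     defect_id, threshold=0.8):
    """Reject a bottle and report it if the model flags a missing cap."""
    if label != "missing cap" or confidence < threshold:
        return None  # sealed bottle or low-confidence result: do nothing

    # 1. Physical action first: drive the digital output wired to the kicker.
    set_digital_output(True)

    # 2. Then report lightweight metadata instead of video.
    payload = json.dumps({"defect_id": defect_id, "status": "rejected"})
    publish("factory/line1/defects", payload)  # hypothetical topic name
    return payload


# Stand-ins so the sketch runs anywhere; on a gateway these would wrap the
# real DO interface and an MQTT client.
do_events = []
sent = []
result = handle_detection(
    "missing cap", 0.93,
    set_digital_output=do_events.append,
    publish=lambda topic, msg: sent.append((topic, msg)),
    defect_id=102,
)
print(result)  # prints {"defect_id": 102, "status": "rejected"}
```

Passing the output and publish functions in as arguments keeps the decision logic testable on a laptop before it is wired to real hardware. Triggering the output before publishing reflects the priority of the workflow: the reject action should not wait on the network.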

This workflow is the definition of Edge AI for Vision: it is localized, fast, and keeps the heavy video data on site, ensuring your production line never stops for a “cloud timeout.”
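A back-of-the-envelope calculation shows how large the bandwidth gap can be. All inputs here are assumptions chosen for illustration (a 4 Mbit/s camera stream, 500 defect events per day, roughly 100 bytes per message including protocol overhead); substitute your own figures.

```python
def daily_megabytes_video(bitrate_mbps, hours):
    """Data volume of a continuous video stream, in megabytes."""
    seconds = hours * 3600
    return bitrate_mbps * seconds / 8  # megabits -> megabytes


def daily_megabytes_events(events_per_day, bytes_per_event):
    """Data volume of per-defect messages, in megabytes."""
    return events_per_day * bytes_per_event / 1_000_000


# Assumed figures, not measurements from a real deployment.
video_mb = daily_megabytes_video(bitrate_mbps=4, hours=24)
events_mb = daily_megabytes_events(events_per_day=500, bytes_per_event=100)
print(f"video: {video_mb:,.0f} MB/day, events: {events_mb:.2f} MB/day")
# prints "video: 43,200 MB/day, events: 0.05 MB/day"
```

Under these assumptions, the metadata-only approach moves several orders of magnitude less data per camera, which is where the savings on cellular backhaul and cloud ingestion come from.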

Conclusion: Scaling the Future of Industrial Intelligence

The fusion of TinyML and Edge AI for Vision is doing more than just adding “eyes” to the Industrial IoT; it is unlocking a new tier of autonomous decision-making for smart infrastructure. While TinyML serves as an excellent entry point for simple, ultra-low-power sensing, true Industrial Machine Vision—the kind that drives OEE and ensures zero-defect manufacturing—demands the high-performance muscle of Edge AI.

Success in this field isn’t found in a lab-based proof-of-concept; it’s found in the transition to a production-ready platform. A successful deployment hinges on hardware that balances three critical pillars:

- Raw Processing Power: Sufficient NPU-accelerated compute to handle high-resolution inference.

- Operational Ruggedness: The ability to maintain peak performance in high-vibration, temperature-volatile environments.

- Deployment Flexibility: An open software stack that scales with your evolving AI models.

By leveraging an NPU-equipped edge gateway like the Robustel EG5120, you aren’t just buying a device—you’re securing the reliability and scalability needed to transform ambitious AI visions into a resilient, real-world reality.

Frequently Asked Questions

Q1: What does TOPS mean for an NPU?

A1: TOPS stands for “Trillions of Operations Per Second.” It’s a measure of the raw processing power of an AI accelerator. A higher TOPS number generally means the device can run more complex AI models or process data (like video frames) at a faster rate.
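As a rough illustration, a TOPS rating can be turned into a theoretical ceiling on inference rate. The model cost (1.2 billion operations per frame) and the 30% sustained utilization below are assumptions for the sake of the arithmetic. Peak TOPS figures are typically quoted for low-precision integer math, and real throughput also depends on memory bandwidth and on how much of the model the NPU can actually run.

```python
def theoretical_max_fps(tops, model_gops_per_frame, utilization=1.0):
    """Upper bound on inferences per second derived from peak TOPS."""
    ops_per_second = tops * 1e12 * utilization
    ops_per_frame = model_gops_per_frame * 1e9
    return ops_per_second / ops_per_frame


# 2.3 TOPS peak, hypothetical model cost, assumed 30% sustained utilization.
fps = theoretical_max_fps(tops=2.3, model_gops_per_frame=1.2, utilization=0.3)
print(f"theoretical ceiling: about {fps:.0f} frames per second")
```

Treat the result as an upper bound for comparing devices or models, not as a prediction; measured frame rates on the target hardware are the only reliable figure.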

Q2: Do I need to be an AI expert to deploy a vision model on the EG5120?

A2: Not necessarily. While model training requires data science skills, deploying a pre-trained model (e.g., from TensorFlow Hub) on the EG5120 is a more straightforward process for a developer familiar with Linux and Docker. The NXP eIQ™ toolkit also provides tools to simplify the process of converting and optimizing models for the NPU.

Q3: Can the EG5120 connect to standard industrial cameras?

A3: Yes. The EG5120’s Gigabit Ethernet ports allow it to connect directly to any standard IP camera. For cameras with a serial interface, its RS232/RS485 ports can be used. This flexibility makes it an ideal edge gateway with an NPU for retrofitting intelligence into existing camera systems.

About the Author

Robert Liao | Technical Support Engineer

Robert is an IoT Technical Support Engineer at Robustel, specializing in industrial networking and edge connectivity. A certified Networking Engineer, Robert focuses on the deployment and troubleshooting of large-scale IIoT infrastructures. His work centers on architecting reliable, scalable system performance for complex industrial applications, bridging the gap between field hardware and cloud-side data management.